Introducing STEVE NASH, our 2012 Projection Model.

Hello, all. Today is going to mark, for us, the official rollout of the Gothic Ginobili preseason projection model. I made the model, Alex came up with the name — “SRS-Tempered Evaluation of Variable Elucidation; Not A Simple Hyper-Segmented Linear Regression.” Which… is an acronym for STEVE NASH, if you hadn’t noticed already. Yep. It’s either the best model acronym ever, or the worst. Try saying the model name out loud. It’s hilarious, and I can’t stop laughing. But it’s memorable, reasonably descriptive, and honors one of the Gothic’s favorite players. So… I guess it’ll suffice, for now? Regardless. What is STEVE NASH? It’s a model that attempts to use prior data to project what we should expect to happen in the 2012 season. I would never call it a prediction model, for reasons I’ll explain in the introduction, but it offers decent projections of what to expect based on prior data. Come with me on a journey through the seedy world of model fitting, setting your priors, and managing expectations. Let’s meet STEVE NASH, together.

• • •

I. INTRODUCTION

For access to the initial predictions, please see the spreadsheet.

In building a projections model (as opposed to a strict predictions model; see the next paragraph), there were a few things I tried to accomplish. The first? I wanted to ensure that the results were comprehensible, reasonable, and easy to use as a prior base to help establish the weekly projections we’re going to start putting out next week. I wanted to have a single predicted variable, one that we could then turn into wins, losses, and probabilities that various teams make the playoffs. So I created a model that would predict — based on past data and a few sparing summary statistics for the team’s current season — the SRS rating of each NBA team entering the 2012 season. I didn’t want to predict wins, because teams win more or less than their true quality all the time (how many games hinge on an uncharacteristic fluke or a single shot?), and wins aren’t a continuously distributed variable in the same way SRS is (the Pacers can’t have 29.3 wins, suffice it to say). Still, SRS is nice for many reasons, and its simplicity and clarity are what make it my predictor of choice. All SRS does is take the team’s margin of victory and add the team’s strength of schedule, to downweight the rating of teams who played weaker schedules and upweight the rating of teams who played stronger ones. For the lowdown on SRS — including how to use it to predict spread and favorites in games (a fringe prediction we can make with this model) — I’d prefer to redirect you to this great post at Basketball Reference on the subject (and, if you’re more mathematically inclined, this more technical intro is very cool).
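If the "margin of victory plus strength of schedule" description feels abstract, here is a minimal sketch of one common way to compute an SRS-style rating. It assumes that strength of schedule means the average rating of a team's opponents, which makes the definition circular, so the sketch just iterates until the numbers settle. The three teams, the margins, and the function name `simple_rating_system` are all made up for illustration; this is not the code behind STEVE NASH.

```python
from collections import defaultdict


def simple_rating_system(games, iterations=200):
    """Iteratively estimate SRS-style ratings.

    games: list of (team, opponent, margin) tuples, with margin measured
    from the first team's point of view (hypothetical toy data below).
    """
    margins = defaultdict(list)
    opponents = defaultdict(list)
    for team, opp, margin in games:
        margins[team].append(margin)
        opponents[team].append(opp)
        margins[opp].append(-margin)
        opponents[opp].append(team)

    # Average margin of victory per team.
    mov = {team: sum(ms) / len(ms) for team, ms in margins.items()}

    # Start from margin of victory, then repeatedly add strength of
    # schedule (assumed here: the mean rating of a team's opponents).
    ratings = dict(mov)
    for _ in range(iterations):
        updated = {}
        for team in mov:
            opps = opponents[team]
            sos = sum(ratings[o] for o in opps) / len(opps)
            updated[team] = mov[team] + sos
        # Re-center so the league average stays at zero.
        mean = sum(updated.values()) / len(updated)
        ratings = {team: r - mean for team, r in updated.items()}
    return ratings


# Hypothetical three-team round robin.
toy_games = [("A", "B", 10), ("B", "C", 5), ("A", "C", 3)]
toy_ratings = simple_rating_system(toy_games)
for team, rating in sorted(toy_ratings.items(), key=lambda kv: -kv[1]):
    print(f"{team}: {rating:+.2f}")
```

In this toy league everyone plays everyone once, so the schedule adjustment only rescales the margins. With an unbalanced schedule, the adjustment is what separates a team that beat up on weak opponents from one that earned the same margin against good ones.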

While it’s true that I’m predicting future SRS, this isn’t really a prediction model. Most people muddy the difference between a projection and a prediction when they discuss model output. But they’re very different animals: A projection is an attempt to put together all the information we currently have into a numerical summary of what we already know. A prediction, on the other hand, is speculation about things we can’t possibly know. My go-to article to link in explaining the difference between the two is this excellent FanGraphs post by Dave Cameron. In creating a projections model, I’m essentially attempting to create a simple ranking of how good we can expect certain teams to be. I realize that the projections my model spits out aren’t going to be very good predictions — by design, this model regresses to the mean (making the extremely good/bad teams this season look like middling .650/.350 teams) and assigns a high standard deviation to each team’s SRS. It uses prior data to try and find the teams that are poised to make serious leaps, or take a serious spill. It tries to project the best team in the league. Et cetera, et cetera.

The projected win totals aren’t going to hold up after a full season — one or many teams are going to get hot and end with 44+ wins, even if the current best team is only projected to win 40. There are some teams we can be virtually positive these playoff probabilities underrate (I’d put Miami and OKC at a 100% shot of making the playoffs). And there are some teams that this model definitely underrates (Portland at 66%? New Jersey at 4%? Dallas at 20%?). But on the whole, the model gives a reasonable expectation based on seasons past. To test the model, I used two years (1999 and 2011) as a holdout sample and ran the model process on every other season in our data (1993-2010) to ensure it was giving us proper coverage. The results for those years had the same feel as the results for this year — primarily conservative mean-regressed predictions, one or two big leaps, one or two big falls, and enough creativity in its projections that it brings something new to the table. One thing to keep in mind while you peruse these projections: these are based in no way on the current season data. That will come into play in 2 or 3 days — for now, these projections are based only on data available to us prior to the first day of the season. This model is only a prior: As the season goes on, we’ll be weighting the projections against the new, actual SRS data of the season.
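To make "this model is only a prior" a little more concrete, here is a rough sketch of one textbook way to weight a preseason projection against in-season results: a precision-weighted average, where each source counts in proportion to how certain it is. This is an assumption for illustration and not necessarily the weighting the weekly rankings will use. The function name `blend_prior_with_season`, the standard deviations, and the observed SRS are all hypothetical; only the -2.103 comes from the projections.

```python
def blend_prior_with_season(prior_mean, prior_sd, observed_srs,
                            games_played, per_game_sd):
    """Precision-weighted blend of a preseason projection and observed SRS.

    Assumes (for illustration only) that both the prior and the per-game
    noise are roughly normal. Returns the blended mean and its sd.
    """
    prior_precision = 1.0 / prior_sd ** 2
    # More games played means a more precise observed rating.
    data_precision = games_played / per_game_sd ** 2
    total_precision = prior_precision + data_precision
    blended_mean = (prior_mean * prior_precision
                    + observed_srs * data_precision) / total_precision
    blended_sd = (1.0 / total_precision) ** 0.5
    return blended_mean, blended_sd


# Hypothetical inputs: the projected -2.103 SRS, with a made-up prior sd,
# a made-up observed SRS of +3.0, and a made-up per-game sd of 12 points.
for games in (0, 5, 20, 66):
    mean, sd = blend_prior_with_season(-2.103, 4.0, 3.0, games, 12.0)
    print(f"after {games:2d} games: blended SRS {mean:+.2f} (sd {sd:.2f})")
```

Before any games are played, the blend is just the prior. As games pile up, the observed SRS takes over and the uncertainty shrinks, which is the behavior you would want from any scheme of this kind.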

• • •

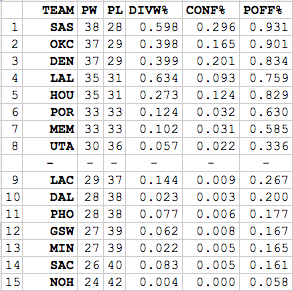

II. THE LOWDOWN OUT WEST

STEVE NASH sees the stage as pretty wide open out west. There are five teams that the model gives a greater than 7.5 percent shot at winning the west in the regular season — the Spurs (29.6%), Thunder (16.5%), Nuggets (20.1%), Rockets (12.4%), and Lakers (9.3%). Of these, I’d caution that the Spurs have more downward momentum than the model assesses (especially with the short leash Pop is going to give the Spurs’ vets this season to try and keep them playoff-fresh), and I’d drop them and the Nuggets a few points to raise the Thunder a touch (if I were making personal predictions). The model is low on the Thunder’s potential to improve, as well, which I found interesting. It essentially sees the Thunder and the Nuggets as a tossup to win their division, with the post-Melo Nuggets ending up with a lower SRS but a higher variance in their final result (which leads to them leading the West more often than the Thunder in the projections).
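The bit about a lower SRS but a higher variance winning the West more often can sound backwards, so here is a small simulation sketch of the effect. Every team label, mean, and standard deviation below is invented for illustration (they are not STEVE NASH outputs), and the normal draws are an assumption. The point is only that when a stronger favorite sits on top, finishing first requires a big season, and a volatile team has more of those than a steady one.

```python
import random


def first_place_shares(teams, simulations=20000, seed=2012):
    """Share of simulated seasons in which each team has the top SRS.

    teams: dict mapping a label to (mean_srs, sd_srs). All values passed
    in below are hypothetical.
    """
    rng = random.Random(seed)
    firsts = {name: 0 for name in teams}
    for _ in range(simulations):
        # Draw one final SRS per team and credit whoever finishes on top.
        draws = {name: rng.gauss(mean, sd)
                 for name, (mean, sd) in teams.items()}
        firsts[max(draws, key=draws.get)] += 1
    return {name: count / simulations for name, count in firsts.items()}


# Hypothetical conference: "steady" has the better mean, "volatile" the
# wider spread, and "favorite" sits above both.
toy_conference = {
    "favorite": (4.0, 2.5),
    "steady": (3.0, 1.5),
    "volatile": (2.5, 4.0),
    "fourth": (2.0, 3.0),
    "fifth": (1.5, 3.0),
}
shares = first_place_shares(toy_conference)
for name, share in sorted(shares.items(), key=lambda kv: -kv[1]):
    print(f"{name:9s} finishes first in {share:.1%} of simulations")
```

Head to head, the steady team still finishes ahead of the volatile one more often than not. It is only in the race for first place that the extra variance pays off.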

You may look at that and wonder about the omission of the Mavericks. Well, I did too, until I noticed exactly how far the model predicted they’d fall. In a word? Wow. Without question, the model’s biggest prediction and biggest reach is in predicting that the 2012 Mavericks are going to plummet this season, falling all the way from the eighth best SRS in the league down to an SRS of -2.103. Because that’s an extremely interesting prediction (considering that these predictions come using exactly zero of the games currently played as data), I went deep into the model to try and figure out the true drivers of that prediction. The root of the Mavs’ problems? Teams with little depth that rely on one or two efficient players don’t project well in this segment. Neither do old teams, and the 2011 Mavs are one of the oldest teams in this sample. The Mavs are incredibly old, as I covered in my Mavs preview — it’s not necessarily a death knell for a roster to be old, but for teams in this segment, it kind of is. This all said, I have to stare at that and think they’ll get better. I don’t think they’re a title contender, but they’re not nearly as bad as the 2011 Warriors or Clippers, two teams in the general range of the predicted SRS for the 2012 Mavericks (-2.103). I expect the model will be proven wrong here.

Other points of contention? I think the Blazers, Clippers, and Thunder will be better than the model does. I think the Jazz, Suns, and Kings will be worse. They’re reasonable projections all around, despite my disagreements, but I expect this model will have one or two teams make it look somewhat silly out West, highlighted by the Mavericks. Which is fine, all things considered — I’d rather have a model that makes mistakes and learns from them than one that takes the easy choice every single time. You’re an OK guy, STEVE NASH.

• • •

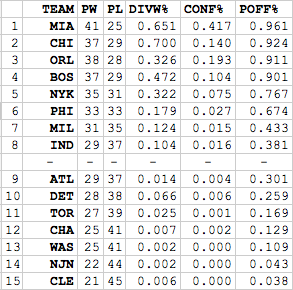

III. THE LOWDOWN OUT EAST

Well, what else was it going to say, right? The Heat are allotted a 42% chance of winning the east, and are essentially assured of making the playoffs. Behind them, the Magic have a puncher’s chance at 19%, the Bulls have a long but decent shot at repeating their best-in-class 2011 (14%), and the Celtics and Knicks are distant wildcards at 10.4% and 7.5%, respectively. Unlike the no-dominant-team West, the Heat fit the bill out east, completely destroying their division. The entire playoff picture is relatively clear, though I think the Pacers may supplant the Celtics in the top 4. In fact, scratch the “may”. I’m pretty sure they will. So I very much disagree with my model on that one. But I respect its right to have such an opinion, because I am a respectful man. There aren’t as many points of contention in these projections, I don’t think — I’m surprised the Nets are so low, but then I looked at their roster and was less surprised. It’s going to take more than Deron Williams alone to make that roster into a contender.

• • •

IV. APPENDIX: STATISTICAL METHODOLOGY

For more details of the statistical methodology, see the appendix here.

• • •

This concludes today’s rollout of the model. In 3 or 4 days, we’ll post our first set of weekly power rankings that combine this season’s data with STEVE NASH in order to create updating projections of win totals and probabilities throughout the season. Until then? Have fun with today’s games, and have a happy New Year. Stay safe — only a fool drives drunk, and there’s a lot of fools afoot on New Year’s.